The cinema experience is made more accessible to those with hearing and visual disabilities through the use of specialized sound tracks and visual aids. These same mechanisms may also be used to aid those whose language may be different from that of the production. Accessible sound and visual aids include Hearing-Impaired (HI) and Visually Impaired Narrative (VI-N) sound, as well as Open Subtitles and Captions, Closed Subtitles and Captions, and Sign Language Video. The delivery vehicles for this specialized content are the subject of this chapter.

Accessibility & The Audio Track File

Hearing-Impaired (HI) Audio

Hearing-Impaired audio dates back to the 1970s, when it was first included as an output in Dolby cinema audio processors. The purpose of the HI channel is to boost dialog over music and sound effects, with the intent of making dialog easier to understand through the use of headphones. A typical listener might suffer from high-frequency hearing loss, for example, and thus benefit from the boosted dialog. The HI output signal of cinema audio processors is derived from the dialog-dominant Center channel, coupled with a softer mix of Left and Right audio (which predominantly carry music and effects) than what would be heard unaided in the auditorium. Several commercial methods exist to deliver specialized audio to audience-worn headphones using infrared light or radio waves.
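The processor-derived HI mix described above can be sketched in a few lines. This is only an illustration of the idea (full-level Center plus attenuated Left and Right); the function name `derive_hi` and the `side_gain` value are assumptions, not figures taken from any audio processor.

```python
def derive_hi(left, center, right, side_gain=0.5):
    """Return a mono HI signal from three equal-length channels of samples.

    Center is passed at full level; Left and Right are summed and attenuated
    by side_gain. The default of 0.5 is an illustrative assumption only.
    """
    if not (len(left) == len(center) == len(right)):
        raise ValueError("channels must have equal length")
    return [c + side_gain * (l + r) for l, c, r in zip(left, center, right)]


# Two samples per channel: dialog in Center, music/effects in Left and Right.
hi = derive_hi(left=[0.5, 0.0], center=[1.0, 0.25], right=[0.5, 0.0])
```

A real implementation would also manage headroom and limiting, which this sketch leaves out.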

In digital cinema, a different approach to HI audio has been introduced, where the HI signal can be generated in post-production and distributed as a sound channel in the 16-channel MainSound Track File. (The forthcoming Sound section will provide audio channel assignment information.) The same commercial methods as before for delivering HI audio through headphones in the auditorium can be applied.

Some manufacturers choose to support both methods, providing a center-channel-dominant, audio-processor-generated mix for HI as a fallback for when the HI signal is not present in the Composition. Notably, the Composition does not include metadata that instructs the system when to fall back to audio-processor-generated HI audio.
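Because the Composition carries no instruction about when to fall back, the decision rests with the playback system. The sketch below shows one possible selection rule. It assumes the system has already determined which channels are present and exposes them as a set of labels; the label `"HI"`, the function name, and the return values are all hypothetical.

```python
def select_hi_source(composition_channels, processor_mix_available=True):
    """Choose the source of HI audio for the headphone feed.

    composition_channels: set of channel labels found in the sound track
    (a hypothetical representation; how presence is detected varies by system).
    """
    if "HI" in composition_channels:
        return "composition"        # HI mix authored in post-production
    if processor_mix_available:
        return "audio_processor"    # center-dominant mix generated on site
    return None                     # no HI audio can be offered


source = select_hi_source({"L", "R", "C", "LFE", "Ls", "Rs"})
```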

Visually-Impaired Narrative (VI-N) Audio

Visually-Impaired Narrative was first introduced to cinema in the 1990s with the DTS cinema sound system for film. The target audience of the VI-N channel has good hearing, but either no vision or difficulties with vision. The narrative describes the events taking place on screen to the listener. As with HI, the auditorium delivery mechanism to the headphone is typically infrared light or radio wave. The VI-N signal is distributed as an audio channel in the 16-channel MainSound Track File. (See MainSound Track File.)

Sign Language Video

Sign Language Video was introduced as a recommendation by the Motion Picture Association of America (MPAA) in 2017 to satisfy new regulations introduced in Brazil, and potential regulations forthcoming in other countries. The video channel is encoded as a VP9 bit stream for inclusion in the MainSound Track File. The VP9 video frame rate is set to match that of the Composition. In this manner, the video signal is automatically synchronized with the movie, requiring no external synchronization methods. (The forthcoming Audio section will provide audio channel assignment information.) Sign Language Video is viewed by the audience on a second screen, typically a smartphone.

Timed Text Track Files

Open Subtitles, Closed Subtitles, Open Captions, and Closed Captions are each a type of Timed Text essence, where each type is targeted to a specific audience. Timed Text essence may be included in the Composition as a Timed Text Track File. While the essence is referred to as Timed Text, the actual track files may also reference and carry graphics.

The essence of Timed Text is defined in SMPTE ST 428-7 DCDM – Subtitle. However, the purpose of the Timed Text Track File is identified in the Composition Playlist (CPL). SMPTE ST 429-7 DCP – Composition Playlist defines the MainSubtitle element for Open Subtitle essence. This definition is extended in SMPTE ST 429-12 DCP – Caption and Closed Subtitle to include the additional elements of ClosedSubtitle, MainCaption, and ClosedCaption.
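The relationship between CPL element names and the purpose of a Timed Text Track File can be summarized as a lookup table. The sketch below is illustrative: the sample XML is a simplified, non-schema-valid fragment with namespaces and child elements omitted, and the function names are hypothetical. Only the four element names and the standards that define them come from the text above.

```python
import xml.etree.ElementTree as ET

# CPL element name -> (purpose, where it is shown, defining standard)
TIMED_TEXT_ELEMENTS = {
    "MainSubtitle": ("Open Subtitle", "on-screen", "SMPTE ST 429-7"),
    "MainCaption": ("Open Caption", "on-screen", "SMPTE ST 429-12"),
    "ClosedSubtitle": ("Closed Subtitle", "off-screen", "SMPTE ST 429-12"),
    "ClosedCaption": ("Closed Caption", "off-screen", "SMPTE ST 429-12"),
}


def local_name(tag):
    """Strip an ElementTree '{namespace}' prefix, if any."""
    return tag.rsplit("}", 1)[-1]


def timed_text_purposes(xml_text):
    """List (element, purpose, display, standard) for each timed text element."""
    found = []
    for element in ET.fromstring(xml_text).iter():
        name = local_name(element.tag)
        if name in TIMED_TEXT_ELEMENTS:
            found.append((name,) + TIMED_TEXT_ELEMENTS[name])
    return found


# Simplified stand-in for one Reel's asset list (not a valid CPL).
SAMPLE = """<AssetList>
  <MainPicture/>
  <MainSound/>
  <MainSubtitle/>
  <ClosedCaption/>
</AssetList>"""

purposes = timed_text_purposes(SAMPLE)
```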

SMPTE Timed Text essence is wrapped as an MXF track file in accordance with SMPTE ST 429-5 DCP – Timed Text Track File. By doing so, SMPTE Timed Text assets can be encrypted and managed in the same manner as Picture and Sound track files. As explained below, Timed Text essence may have fonts and graphics associated with it. For SMPTE Timed Text, the associated files are wrapped with the essence in the Timed Text Track File. In contrast, Interop Timed Text essence is delivered in the package as an XML file, with the associated font and graphics essence also delivered as separate files.

Open Subtitles and Open Captions

Open Subtitles and Open Captions refer to text superimposed on the picture displayed in the auditorium. As such, they are visible to all members of the audience. Open Subtitles are typically displayed on-screen as text in a language other than the language of the movie’s sound track. The intent of the Open Subtitle is to translate the dialog of the sound track for the audience. Open Captions are also typically displayed on-screen as text, but in the same language as the movie’s sound track, with the intent to convey the dialog and action of the movie to those who are hearing-impaired or deaf. Graphics may also be displayed with on-screen timed text. Open Subtitle track files are identified in the CPL as MainSubtitle, and Open Caption track files are identified as MainCaption. A Composition may contain only one MainSubtitle, or one MainCaption file.

Timed Text is managed differently in SMPTE and Interop DCP. Both packaging methods employ XML for Timed Text essence, but each defines the XML differently. (The difference in Timed Text essence has proven to be one of the stumbling blocks in the adoption of SMPTE DCP.) In addition, Interop delivers Timed Text as an XML file in the Composition package, while SMPTE DCP wraps Timed Text XML essence in MXF. This is further explained below.

Interop Timed Text essence is based on a proprietary format introduced by Texas Instruments called CineCanvas™. The latest CineCanvas documentation is available on the Interop DCP page. The CineCanvas file is in XML format, and included in the Composition as an XML file. Text properties and associated actions include language, font, timing of display, fade up/down properties, and the text position properties of direction, horizontal alignment and position (left, center, right), and vertical alignment and position (top, center, bottom). Image (graphics) properties include timing of display, fade up/down properties, horizontal alignment and position, and vertical alignment and position. Font and graphics files may be included with the distribution.

Timed Text essence in SMPTE DCP is defined in SMPTE ST 428-7 DCDM – Subtitle. The SMPTE Subtitle format provides more sophisticated control of display, placement, and font properties than CineCanvas, and includes 3D text. SMPTE Subtitle also includes Ruby text: small annotations, placed alongside the logographic characters used in the Chinese, Japanese, and Korean languages, that guide pronunciation. As with CineCanvas, horizontal alignment and position are described in terms of left, center, and right, and vertical alignment and position in terms of top, center, and bottom. Font and graphics files may be included with the distribution.

Closed Captions and Closed Subtitles

Closed Captions and Closed Subtitles are text-only recitations directed to a personal display, and not visible to the general audience (i.e., off-screen).

SMPTE ST 428-10 DCDM Closed Caption and Closed Subtitle sets forth a set of constraints applied to Timed Text essence for the off-screen applications of Closed Captions and Closed Subtitles. The constraints essentially limit the interpretation of positioning elements in the XML document to the vertical position. The same closed caption and closed subtitle constraints applied to SMPTE off-screen Timed Text may also be applied to CineCanvas™ off-screen applications in Interop distributions. Unlike Open Subtitles and Open Captions, Closed Subtitles and Closed Captions may carry up to six (6) versions of off-screen Timed Text Track Files in one Composition, one language per Track File. It is up to the personal display provider to provide on-demand access to the multiple track versions. The packaging of off-screen Timed Text Track Files deserves special attention, as explained in the Reel Flexibility for Timed Text section.

Interop DCP Closed Caption files are a constrained version of the CineCanvas format. The constraint documentation is available on the Interop DCP page.

Reel Flexibility for Timed Text

Unlike Picture and Sound Track Files, Timed Text Track Files do not require one essence file per Composition Reel. Nor is it required that the timed text essence in a Timed Text Track File pertain only to the time frame of its associated Reel. In other words, timed text essence in a track file may carry content that plays outside of the time period of its Reel. This rule holds for both on-screen and off-screen Timed Text. The reason for this behavior is that Timed Text essence cannot be edited on a scene-by-scene basis in the way that Picture and Sound can. As an example, a timed text recitation may need to overlap Reels. Based on this rule, it is possible to have only one Timed Text Track File for any one purpose in a multi-Reel Composition, with no loss of Timed Text in the movie.
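To see why a recitation may need to overlap Reels, consider a cue placed on the overall Composition timeline. The sketch below reports which Reels a cue touches. The reel durations, the frame-based timeline, and the function name are illustrative assumptions, not values from any standard.

```python
def reels_spanned(reel_durations, cue_start, cue_end):
    """Return the 1-based numbers of the Reels a cue overlaps.

    reel_durations: duration of each Reel in frames, in playout order.
    cue_start, cue_end: cue interval in frames on the Composition timeline,
    with cue_end exclusive.
    """
    spanned = []
    reel_start = 0
    for number, duration in enumerate(reel_durations, start=1):
        reel_end = reel_start + duration
        # Two half-open intervals overlap when each starts before the other ends.
        if cue_start < reel_end and cue_end > reel_start:
            spanned.append(number)
        reel_start = reel_end
    return spanned


# A line of dialog that begins 50 frames before the end of Reel 1
# and finishes 50 frames into Reel 2.
spanned = reels_spanned([1000, 1200, 900], cue_start=950, cue_end=1050)
```

Here `spanned` is `[1, 2]`: the cue cannot be cut at the Reel boundary without splitting the text mid-display, which is the situation the rule accommodates.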

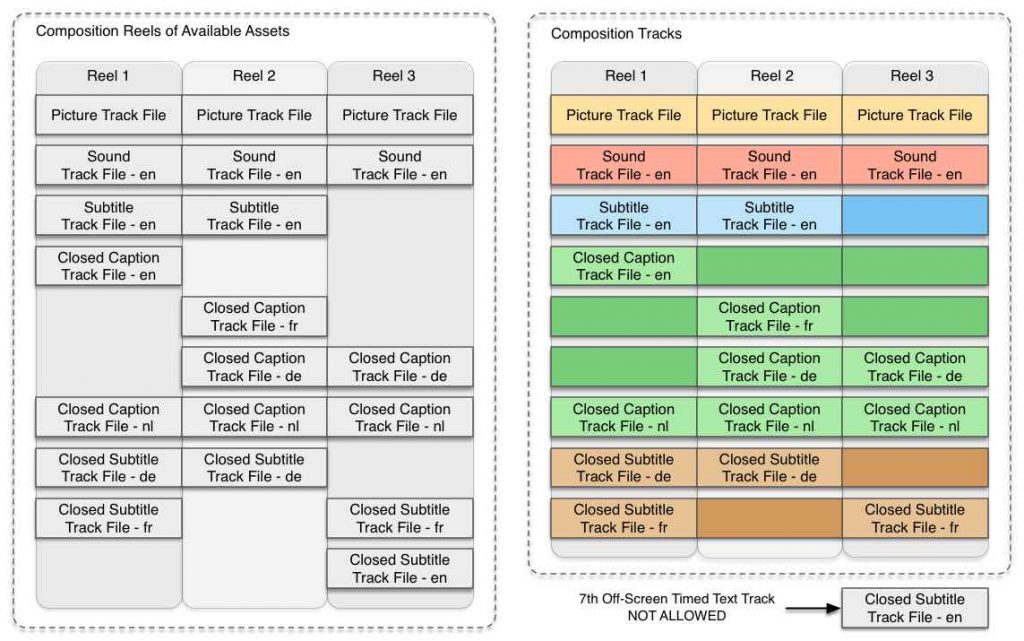

Figure TTR-1 illustrates the concept. The set of available assets is shown in the left diagram. The manner in which the assets are handled by the projection system is shown in the right diagram. Languages are labeled as English (en), French (fr), German (de), and Dutch (nl). In the left diagram, Reel 1 has one Picture Track File, one Sound Track File, and one (Open) Subtitle Timed Text Track File. Reel 1 also carries four (4) off-screen Timed Text Track Files. The remaining Reels can be discerned accordingly. However, when assembling the track files for playout, as shown in the right diagram, the system discovers that there are seven (7) different types of off-screen Timed Text Track Files, one more than allowed. Only the first six (6) off-screen Timed Text Track Files will be played. In the case illustrated, the seventh track file will not be played.

Figure TTR-1. Example of Media Block interpretation of multiple Timed Text Track Files.
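The six-track limit can be sketched as a simple selection over the Reels in playout order. The sketch assumes that an off-screen track "type" is identified by its kind and language, and that tracks are ranked by first appearance; both are assumptions made for illustration. The sample data is hypothetical and does not reproduce the assets in Figure TTR-1.

```python
def select_offscreen_tracks(reels, limit=6):
    """Split distinct off-screen track types into (played, dropped).

    reels: list of Reels, each a list of (kind, language) tuples.
    Types are kept in order of first appearance, up to limit.
    """
    played, dropped = [], []
    for reel in reels:
        for track in reel:
            if track in played or track in dropped:
                continue  # this type was already seen in an earlier Reel
            (played if len(played) < limit else dropped).append(track)
    return played, dropped


# Hypothetical three-Reel Composition carrying seven distinct types.
REELS = [
    [("ClosedCaption", "en"), ("ClosedSubtitle", "fr"),
     ("ClosedSubtitle", "de"), ("ClosedSubtitle", "nl")],
    [("ClosedCaption", "en"), ("ClosedCaption", "fr"), ("ClosedCaption", "de")],
    [("ClosedCaption", "en"), ("ClosedCaption", "nl")],
]

played, dropped = select_offscreen_tracks(REELS)
```

With this data, six types are played and the seventh, `("ClosedCaption", "nl")`, is dropped.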

Communications for Off-Screen Timed Text

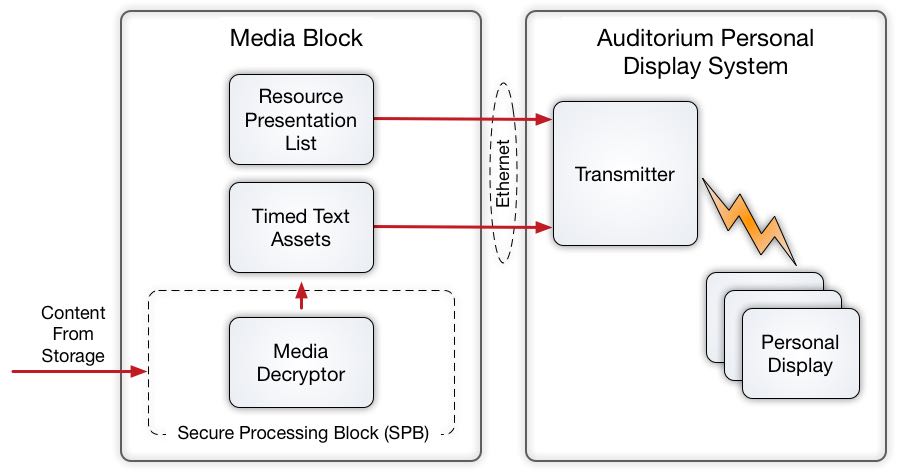

To be useful, off-screen Timed Text (Closed Captions and Closed Subtitles) must be communicated by the Media Block to outboard transmitters and personal display systems. In practice, the transmitter and personal display devices comprise a self-contained commercial system. Such commercial systems receive the off-screen Closed Caption and Closed Subtitle data from the Media Block using a standardized set of protocols over Ethernet.

Ethernet communications are handled using the SMPTE ST 430-10 DCO – Auxiliary Content Synchronization Protocol. Using the protocol, the Media Block establishes communications with the outboard personal captioning system, and sends a list of XML timed text assets in the format defined by SMPTE ST 430-11 DCO – Auxiliary Resource Presentation List. Following that, the personal captioning system pulls the timed text data from the Media Block as needed, based on timing information supplied by the protocol, and displays the text on the personal display. Unlike Picture and Sound data, DCI security requirements allow decrypted Timed Text Track Files to be stored in the clear in local storage. The accompanying diagram illustrates the process.